Robots & Sitemap Inspector

Decode your crawler rules. Ensure your sitemap is visible and you aren't accidentally blocking traffic.

What are robots.txt and sitemaps?

Robots.txt is a gatekeeper file that tells search engine bots which parts of your site they are allowed to crawl. Sitemaps are maps that tell bots where to find your content. Together, they shape how your site is crawled and indexed.

Why use this inspector?

- Prevent Accidents: Ensure you haven't accidentally blocked Google or AI bots.

- Verify Visibility: Confirm your sitemap is referenced in robots.txt and accessible to crawlers.

How this Robots & Sitemap Inspector Works

This tool fetches your `robots.txt` file and evaluates its rules against the user-agent tokens used by major AI crawlers (GPTBot, CCBot, Google-Extended). It checks for `Disallow` directives that might be blocking specific bots.

- Syntax Validation: We check that your rules follow the Robots Exclusion Protocol (RFC 9309).

- Bot-Specific Checks: We specifically look for `User-agent: GPTBot` and `User-agent: CCBot` groups.

- Sitemap Reachability: We check whether the sitemap URL referenced in your robots.txt is actually reachable (returns 200 OK).
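
The checks above can be approximated with Python's standard library. The sketch below is not the inspector's actual implementation: the `inspect_robots` and `sitemap_is_reachable` helpers, the sample rules, and the `example.com` URLs are hypothetical, and `urllib.robotparser` applies its own matching rules, which may differ from how individual crawlers read the file. It assumes Python 3.8 or later for `site_maps()`.

```python
import urllib.request
from urllib.robotparser import RobotFileParser

# User-agent tokens named in this article.
AI_BOTS = ("GPTBot", "CCBot", "Google-Extended")

# Hypothetical robots.txt used only for illustration.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /

Sitemap: https://example.com/sitemap.xml
"""


def inspect_robots(robots_txt, url="https://example.com/"):
    """Return (per-bot access for `url`, sitemap URLs listed in the file)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    access = {bot: parser.can_fetch(bot, url) for bot in AI_BOTS}
    sitemaps = parser.site_maps() or []  # site_maps() returns None when absent
    return access, sitemaps


def sitemap_is_reachable(sitemap_url, timeout=10):
    """Return True if a HEAD request to the sitemap answers 200 OK."""
    request = urllib.request.Request(sitemap_url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status == 200
    except OSError:  # covers URLError, HTTPError, and timeouts
        return False


access, sitemaps = inspect_robots(SAMPLE_ROBOTS)
print(access)
print(sitemaps)
```

`sitemap_is_reachable` is defined but not called here because it needs network access. Some servers reject HEAD requests, so a real check may need to fall back to GET.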

Why it Matters for GEO

Accidental Blocking

Many sites copy-paste old robots.txt files that block "all bots" or use wildcard Disallows that inadvertently catch useful AI crawlers.
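
To see how a blanket rule reaches crawlers it never names, the sketch below feeds a hypothetical legacy robots.txt to Python's `urllib.robotparser`. The `blocked_bots` helper, the bot list, and the `example.com` URL are assumptions chosen for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical legacy file: one wildcard group that disallows everything.
LEGACY_RULES = """\
User-agent: *
Disallow: /
"""


def blocked_bots(robots_txt, bots, url="https://example.com/"):
    """Return the bots from `bots` that may not fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in bots if not parser.can_fetch(bot, url)]


blocked = blocked_bots(LEGACY_RULES, ["GPTBot", "CCBot", "Googlebot"])
print(blocked)
```

None of the three bots has its own group in this file, so each one falls back to the wildcard group and is reported as blocked.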

Crawl Budget

If your robots.txt is messy or your sitemap is broken, crawlers waste time (and budget) trying to navigate your site, leading to poorer indexation.

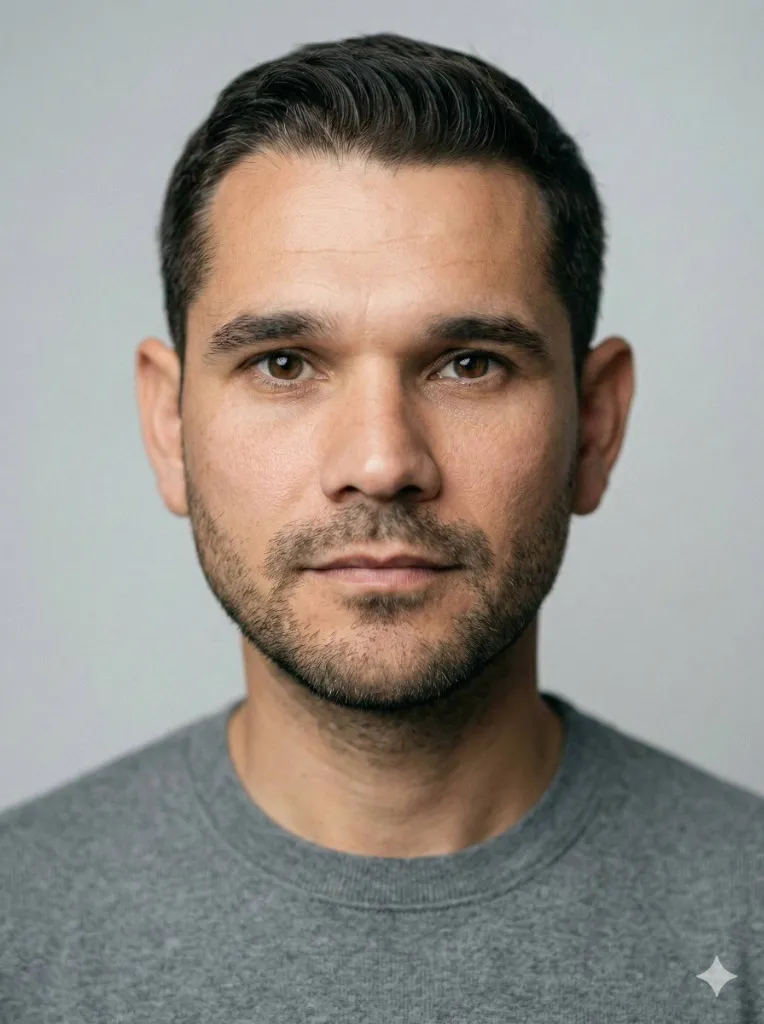

Davide Agostini

Android Mobile Engineer and Founder of ViaMetric. Davide specializes in technical SEO and the emerging field of Generative Engine Optimization (GEO), helping founders navigate the shift from links to AI citations.

Frequently Asked Questions

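GPTBot is OpenAI's web crawler. OpenAI's crawler documentation describes it as collecting content that may be used to train its models, while a separate agent, OAI-SearchBot, is documented for ChatGPT search. Blocking GPTBot opts your site out of that crawl. To control whether your content can appear in ChatGPT search, check your OAI-SearchBot rules as well.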

If the inspector reports that your sitemap was not found, the file doesn't exist at the URL you provided. Check whether it's named `sitemap.xml` or `sitemap_index.xml` and whether it's located at the root of your domain.
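
As a rough aid for that check, the sketch below builds the two root-level sitemap URLs worth trying for a given page address. The `candidate_sitemap_urls` helper and the `example.com` address are hypothetical. Only the two filenames mentioned above are covered, and a sitemap can legitimately live elsewhere if robots.txt points to it.

```python
from urllib.parse import urlsplit, urlunsplit

# Filenames mentioned in the answer above.
SITEMAP_NAMES = ("sitemap.xml", "sitemap_index.xml")


def candidate_sitemap_urls(site_url):
    """Return the root-level sitemap URLs to try for any URL on the site."""
    parts = urlsplit(site_url)
    # Keep only scheme and host; drop path, query, and fragment.
    root = urlunsplit((parts.scheme, parts.netloc, "", "", ""))
    return [f"{root}/{name}" for name in SITEMAP_NAMES]


candidates = candidate_sitemap_urls("https://example.com/blog/post?x=1")
print(candidates)
```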

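Yes. You can allow some bots and block others with bot-specific User-agent groups, with each directive on its own line. For example, a `User-agent: Googlebot` group containing `Allow: /` and a `User-agent: GPTBot` group containing `Disallow: /`.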
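
In the file itself, every directive sits on its own line and groups are separated by a blank line. The sketch below renders such a file from a simple allow/block policy and then verifies it with Python's `urllib.robotparser`. The `render_robots` helper and the policy dictionary are hypothetical, and the code assumes Python 3.9 or later for the `dict[str, bool]` annotation.

```python
from urllib.robotparser import RobotFileParser


def render_robots(policy: dict[str, bool]) -> str:
    """Render one User-agent group per bot: Allow: / or Disallow: /."""
    groups = []
    for agent, allowed in policy.items():
        directive = "Allow" if allowed else "Disallow"
        groups.append(f"User-agent: {agent}\n{directive}: /")
    # Blank line between groups, trailing newline at end of file.
    return "\n\n".join(groups) + "\n"


robots_txt = render_robots({"Googlebot": True, "GPTBot": False})
print(robots_txt)

# Verify the rendered rules behave as intended.
parser = RobotFileParser()
parser.parse(robots_txt.splitlines())
googlebot_allowed = parser.can_fetch("Googlebot", "https://example.com/")
gptbot_allowed = parser.can_fetch("GPTBot", "https://example.com/")
print(googlebot_allowed, gptbot_allowed)
```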